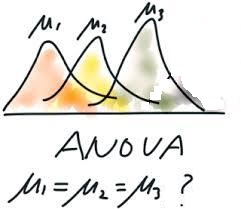

7.2: The one-way ANOVA

For example, consider the following three data sets.

$(\sharp_1)$ A data set $x_{11}, x_{12}, x_{13}, \ldots, x_{1n_1}$ is obtained from the normal distribution $N(\mu_1, \sigma)$.

$(\sharp_2)$ A data set $x_{21}, x_{22}, x_{23}, \ldots, x_{2n_2}$ is obtained from the normal distribution $N(\mu_2, \sigma)$.

$(\sharp_3)$ A data set $x_{31}, x_{32}, x_{33}, \ldots, x_{3n_3}$ is obtained from the normal distribution $N(\mu_3, \sigma)$.

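The question is whether the hypothesis $\mu_1 = \mu_2 = \mu_3$ can be rejected on the basis of these data. How should we answer it?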
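To make the setting of $(\sharp_1)$ to $(\sharp_3)$ concrete, here is a minimal Python sketch (an illustration only, not part of the original argument) that simulates such data: group $i$ is drawn from $N(\mu_i, \sigma)$, where $\sigma$ is a standard deviation common to all groups. The helper name `sample_groups` and the parameter values in the usage line are hypothetical.

```python
import random
from typing import List, Optional, Sequence


def sample_groups(mus: Sequence[float], sigma: float, sizes: Sequence[int],
                  seed: Optional[int] = None) -> List[List[float]]:
    """Simulate x_{i1}, ..., x_{in_i} ~ N(mu_i, sigma) for i = 1, ..., a.

    Hypothetical helper: sigma is the common standard deviation of all groups.
    """
    if len(mus) != len(sizes):
        raise ValueError("need one sample size n_i for each mean mu_i")
    if sigma <= 0:
        raise ValueError("sigma must be positive")
    rng = random.Random(seed)  # local generator, reproducible when seed is given
    return [[rng.gauss(mu, sigma) for _ in range(n_i)]
            for mu, n_i in zip(mus, sizes)]


# Hypothetical parameters: a = 3 groups with equal means (the hypothesis holds).
groups = sample_groups(mus=[5.0, 5.0, 5.0], sigma=1.0, sizes=[4, 6, 5], seed=0)
print([len(g) for g in groups])  # [4, 6, 5]
```

Using a local `random.Random` instance keeps the simulation reproducible without touching the global generator.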

More generally, for each $i=1,2, \ldots, a$, let $n_i$ be a natural number (the size of the $i$-th data set), and put $n=\sum_{i=1}^a n_i$. Consider the parallel simultaneous normal observable ${\mathsf O}_G^{n} = (X(\equiv {\mathbb R}^{n}), {\mathcal B}_{\mathbb R}^{n}, G^{n})$ in $L^\infty(\Omega)$, where $\Omega \equiv {\mathbb R}^a \times {\mathbb R}_+$, such that

\begin{align} & [G^{n} ( \widehat{\Xi}) ] (\omega) = \frac{1}{(\sqrt{2 \pi }\sigma)^{n}} \underset{\widehat{\Xi} }{\int \cdots \int} \exp\Big[- \frac{\sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - \mu_i )^2 } {2 \sigma^2} \Big] \times_{i=1}^a \times_{k=1}^{n_i} d x_{ik} \tag{7.14} \\ & \qquad ( \forall \omega =(\mu_1, \mu_2, \ldots, \mu_a, \sigma) \in \Omega = {\mathbb R}^a \times {\mathbb R}_+ , \ \widehat{\Xi} \in {\mathcal B}_{\mathbb R}^{n}) \nonumber \end{align}
That is, consider

\begin{align} {\mathsf M}_{L^\infty ({\mathbb R}^a \times {\mathbb R}_+ )} ({\mathsf O}_G^{n} = (X(\equiv {\mathbb R}^{n}), {\mathcal B}_{\mathbb R}^{n}, G^{n} ), S_{[(\mu=(\mu_1, \mu_2, \ldots, \mu_a ), \sigma )]} ) \end{align}
Put $\alpha_i$ as follows.

\begin{align} \alpha_i= \mu_i - \frac{1}{a} \sum_{j=1}^a \mu_j \qquad (\forall i=1,2, \ldots, a ) \tag{7.15} \end{align}
and put

\begin{align} \Theta = {\mathbb R}^a \nonumber \end{align}
Thus, the system quantity $\pi : \Omega \to \Theta $ is defined as follows.

\begin{align} \Omega = {\mathbb R}^a \times {\mathbb R}_+ \ni \omega =(\mu_1, \mu_2, \ldots, \mu_a, \sigma) \mapsto \pi(\omega) = (\alpha_1, \alpha_2, \ldots, \alpha_a) \in \Theta = {\mathbb R}^a \tag{7.16} \end{align}
Define the null hypothesis $H_N ( \subseteq \Theta = {\mathbb R}^a)$ as follows.

\begin{align} H_N & = \{ (\alpha_1, \alpha_2, \ldots, \alpha_a) \in \Theta = {\mathbb R}^a \;:\; \alpha_1=\alpha_2= \cdots = \alpha_a= 0 \} \nonumber \\ & = \{ ( \overbrace{0, 0, \ldots, 0}^{a} ) \} \tag{7.17} \end{align}
Here, note the following equivalence:

\begin{align} \mu_1=\mu_2=\cdots=\mu_a \;\Longleftrightarrow\; \alpha_1=\alpha_2=\cdots=\alpha_a=0 \;\Longleftrightarrow\; \pi(\omega) \in H_N \ \ (\mbox{see (7.17)}) \end{align}
Hence, our problem is as follows.

Put $n=\sum_{i=1}^a n_i$. Consider the parallel simultaneous normal measurement ${\mathsf M}_{L^\infty ({\mathbb R}^a \times {\mathbb R}_+ )} ({\mathsf O}_G^{n} = (X(\equiv {\mathbb R}^{n}), {\mathcal B}_{\mathbb R}^{n}, G^{n} ), S_{[(\mu=(\mu_1, \mu_2, \ldots, \mu_a ), \sigma )]} )$. Here, assume that

\begin{align} \mu_1= \mu_2= \cdots= \mu_a \end{align}that is,

\begin{align} \pi(\omega)=(0,0, \ldots, 0) \end{align}
Namely, assume the null hypothesis $H_N=\{ (0,0, \ldots, 0) \}$ $(\subseteq \Theta= {\mathbb R}^a)$. Let $0 < \alpha \ll 1$ (e.g., $\alpha = 0.05$).

Then, find the largest ${\widehat R}_{{H_N}}^{\alpha; \Theta}( \subseteq \Theta)$ (independent of $\sigma$) such that

| $(B_1)$ | The probability that a measured value $x \ (\in{\mathbb R}^n )$, obtained by the measurement ${\mathsf M}_{L^\infty ({\mathbb R}^a \times {\mathbb R}_+ )} ({\mathsf O}_G^{n} = (X(\equiv {\mathbb R}^{n}), {\mathcal B}_{\mathbb R}^{n}, G^{n} ), S_{[(\mu=(\mu_1, \mu_2, \ldots, \mu_a ), \sigma )]} )$, satisfies $E(x) \in {\widehat R}_{H_N}^{\alpha; \Theta}$ is not greater than $\alpha$. |
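The map $\pi$ of (7.16) and the null hypothesis (7.17) can be sketched in Python as follows. This is a minimal illustration; the helper names `effects` and `in_null_hypothesis` and the rounding tolerance `tol` are hypothetical. Note that $\pi$ discards $\sigma$, and that by (7.15) the effects $\alpha_i$ always sum to $0$, so they are all equal exactly when they are all zero.

```python
from typing import List, Sequence


def effects(mus: Sequence[float]) -> List[float]:
    """pi(omega) of (7.16): alpha_i = mu_i - (mu_1 + ... + mu_a) / a, see (7.15).

    sigma is not an argument because pi does not depend on it.
    """
    a = len(mus)
    mean_mu = sum(mus) / a  # unweighted mean of the a group means
    return [mu - mean_mu for mu in mus]


def in_null_hypothesis(mus: Sequence[float], tol: float = 1e-12) -> bool:
    """True iff pi(omega) = (0, ..., 0), i.e. mu_1 = ... = mu_a, see (7.17).

    tol (hypothetical choice) absorbs floating-point rounding.
    """
    return all(abs(alpha) <= tol for alpha in effects(mus))


print(effects([1.0, 2.0, 3.0]))             # [-1.0, 0.0, 1.0]
print(in_null_hypothesis([2.5, 2.5, 2.5]))  # True
```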

Answer. Consider the weighted Euclidean norm $\| \theta^{(1)}- \theta^{(2)} \|_\Theta$ in $\Theta={\mathbb R}^a$ as follows.

\begin{align} & \| \theta^{(1)}- \theta^{(2)} \|_\Theta = \sqrt{ \sum_{i=1}^a n_i \Big(\theta_{i}^{(1)} - \theta_{i}^{(2)} \Big)^2 } \nonumber \\ & \qquad (\forall \theta^{(\ell)} =( \theta_1^{(\ell)}, \theta_2^{(\ell)}, \ldots, \theta_a^{(\ell)} ) \in {\mathbb R}^{a}, \; \ell=1,2 ) \nonumber \end{align}Also, put

\begin{align} &X={\mathbb R}^{n} \ni x = ((x_{ik})_{ k=1,2, \ldots, n_i})_{i=1,2,\ldots,a} \nonumber \\ & x_{i \bullet} =\frac{\sum_{k=1}^{n_i} x_{ik}}{n_i}, \qquad x_{ \bullet \bullet } =\frac{\sum_{i=1}^a \sum_{k=1}^{n_i}x_{ik}}{n} \tag{7.18} \end{align}
Theorem 5.6 (Fisher's maximum likelihood method) suggests that we define and calculate $\overline{\sigma}(x) \big(= \sqrt{ \overline{SS}(x)/n } \big)$ as follows.

For $x \in X={\mathbb R}^{{{n}}}$,

\begin{align} & {\overline{SS}}(x) = {\overline{SS}}(((x_{ik})_{k=1,2, \ldots, n_i})_{i=1,2, \ldots, a} ) \nonumber \\ = & \sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet})^2 \nonumber \\ = & \sum_{i=1}^a \sum_{k=1}^{n_i} \Big(x_{ik} - \frac{\sum_{j=1}^{n_i} x_{ij}}{n_i}\Big)^2 \nonumber \\ = & \sum_{i=1}^a \sum_{k=1}^{n_i} \Big((x_{ik}-\mu_i) - \frac{\sum_{j=1}^{n_i} ( x_{ij}-\mu_i)}{n_i}\Big)^2 \nonumber \\ = & {\overline{SS}}(((x_{ik}- \mu_{i})_{k=1,2, \ldots, n_i})_{i=1,2, \ldots, a} ) \tag{7.19} \end{align}
For each $x \in X = {\mathbb R}^{n}$ with $\overline{SS}(x) > 0$, define the semi-distance $d_\Theta^x$ in $\Theta$ such that

\begin{align} & d_\Theta^x (\theta^{(1)}, \theta^{(2)}) = \frac{\|\theta^{(1)}- \theta^{(2)} \|_\Theta}{ \sqrt{\overline{SS}(x)} } \qquad (\forall \theta^{(1)}, \theta^{(2)} \in \Theta ). \tag{7.20} \end{align}
Furthermore, define the estimator $E: X(={\mathbb R}^{n}) \to \Theta(={\mathbb R}^{a} )$ as follows.

\begin{align} & E(x) = E( ((x_{ik})_{k=1,2, \ldots, n_i})_{i=1,2,\ldots,a} ) \nonumber \\ = & \Big( \frac{\sum_{k=1}^{n_1} x_{1k}}{n_1} - \frac{ \sum_{i=1}^a \sum_{k=1}^{n_i} x_{ik}}{n} , \frac{\sum_{k=1}^{n_2} x_{2k}}{n_2} - \frac{ \sum_{i=1}^a \sum_{k=1}^{n_i} x_{ik}}{n}, \ldots, \frac{\sum_{k=1}^{n_a} x_{ak}}{n_a} - \frac{ \sum_{i=1}^a \sum_{k=1}^{n_i} x_{ik}}{n} \Big) \nonumber \\ = & \Big( \frac{\sum_{k=1}^{n_i} x_{ik}}{n_i} - \frac{ \sum_{j=1}^a \sum_{k=1}^{n_j} x_{jk}}{n} \Big)_{i=1,2, \ldots, a } = (x_{i \bullet} - x_{\bullet \bullet })_{i=1,2, \ldots, a } \tag{7.21} \end{align}
Thus, we get

\begin{align} & \| E(x) - \pi (\omega )\|^2_\Theta \nonumber \\ = & \Big\| \Big( \frac{\sum_{k=1}^{n_i} x_{ik}}{n_i} - \frac{ \sum_{j=1}^a \sum_{k=1}^{n_j} x_{jk}}{n} \Big)_{i=1,2, \ldots, a } - ( \alpha_i )_{i=1,2, \ldots, a } \Big\|_\Theta^2 \nonumber \\ = & \Big\| \Big( \frac{\sum_{k=1}^{n_i} x_{ik}}{n_i} - \frac{ \sum_{j=1}^a \sum_{k=1}^{n_j} x_{jk}}{n} - \Big(\mu_i - \frac{1}{a}\sum_{j=1}^a \mu_j \Big) \Big)_{i=1,2, \ldots, a } \Big\|_\Theta^2 \nonumber \end{align}
and, recalling the null hypothesis $H_N$ (i.e., $\alpha_i = \mu_i-\frac{1}{a}\sum_{j=1}^a\mu_j = 0$ for $i=1,2,\ldots, a$), we obtain

\begin{align} = & \Big\| \Big( \frac{\sum_{k=1}^{n_i} x_{ik}}{n_i} - \frac{ \sum_{j=1}^a \sum_{k=1}^{n_j} x_{jk}}{n} \Big)_{i=1,2, \ldots, a } \Big\|_\Theta^2 = \sum_{i=1}^a n_i (x_{i \bullet} - x_{\bullet \bullet })^2 \tag{7.22} \end{align}
Therefore, for any $\omega = (\mu_1, \mu_2, \ldots, \mu_a, \sigma) \in \Omega = {\mathbb R}^{a} \times {\mathbb R}_+$, define the positive real number $\eta^\alpha_{\omega}$ such that

\begin{align} \eta^\alpha_{\omega} = \inf \{ \eta > 0: [G^{n}(E^{-1} ( { Ball}^C_{d_\Theta^{x}}(\pi(\omega) ; \eta)))](\omega ) \le \alpha \} \tag{7.23} \end{align}
where
\begin{align} { Ball}^C_{d_\Theta^{x}}(\pi(\omega) ; \eta) =\{ \theta \in \Theta \;:\; d_\Theta^{x} ( \pi(\omega ) , \theta ) > \eta \}. \tag{7.24} \end{align}
Recalling the null hypothesis $H_N$ (i.e., $\alpha_i = \mu_i-\frac{1}{a}\sum_{j=1}^a\mu_j = 0$ for $i=1,2,\ldots, a$), we calculate $\eta^\alpha_{\omega}$ as follows.

\begin{align} & E^{-1}({ Ball}^C_{d_\Theta^{x} }(\pi(\omega) ; \eta )) =\{ x \in X = {\mathbb R}^{n} \;:\; d_\Theta^x (E(x), \pi(\omega )) > \eta \} \nonumber \\ = & \Big\{ x \in X = {\mathbb R}^{n} \;:\; \frac{ \| E(x)- \pi(\omega) \|^2_\Theta }{\overline{SS}(x)} = \frac{ \sum_{i=1}^a n_i ( x_{i \bullet} - x_{\bullet \bullet} )^2}{ \sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet})^2 } > \eta^2 \Big\} \tag{7.25} \end{align}
For any $\omega =(\mu_1, \mu_2, \ldots, \mu_a, \sigma) \in \Omega={\mathbb R}^{a} \times {\mathbb R}_+$ such that $\pi( \omega ) \ (= (\alpha_1, \alpha_2, \ldots, \alpha_a)) \in H_N \ (=\{(0,0, \ldots, 0)\})$, we see

\begin{align} & [G^{n} ( E^{-1}({ Ball}^C_{d_\Theta^{x} }(\pi(\omega) ; \eta )) )] (\omega) \nonumber \\ = & \frac{1}{(\sqrt{2 \pi }\sigma)^{n}} \underset{ \frac{ \sum_{i=1}^a n_i ( x_{i \bullet} - x_{\bullet \bullet} )^2}{ \sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet})^2 } > \eta^2 }{\int \cdots \int} \exp\Big[- \frac{ \sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - \mu_i )^2 } {2 \sigma^2} \Big] \times_{i=1}^a \times_{k=1}^{n_i} d x_{ik} \nonumber \\ = & \frac{1}{(\sqrt{2 \pi })^{n}} \underset{ \frac{ (\sum_{i=1}^a n_i( x_{i \bullet} - x_{\bullet \bullet} )^2) /(a-1)}{ (\sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet})^2)/(n-a) } > \frac{\eta^2 (n-a) }{ a-1} } {\int \cdots \int} \exp\Big[- \frac{ \sum_{i=1}^a \sum_{k=1}^{n_i} x_{ik}^2 } {2 } \Big] \times_{i=1}^a \times_{k=1}^{n_i} d x_{ik} \nonumber \end{align}
Here the last equality follows from the change of variables $x_{ik} \mapsto (x_{ik}-\mu_i)/\sigma$: under $H_N$ all the $\mu_i$ are equal, and the ratio defining the integration domain is unchanged by a common shift and a common positive scaling of the data.

(B$_2$): By the formula of Gauss integrals (Formula 7.8 (B) in $\S$7.4), we see

\begin{align} = & \int^{\infty}_{ \frac{\eta^2 (n-a) }{ a-1} } p_{(a-1,n-a) }^F(t)\, dt = \alpha \;\; (\mbox{e.g., } \alpha=0.05) \tag{7.26} \end{align}
where $p_{(a-1,n-a) }^F$ is the probability density function of the $F$-distribution with $(a-1, n-a)$ degrees of freedom. Therefore, it suffices to solve the following equation:

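\begin{align} \frac{\eta^2 (n-a) }{ a-1} = F_{n-a, \alpha}^{a-1} \;\; (=\mbox{"$\alpha$-point"}) \tag{7.27} \end{align}
where $F_{n-a, \alpha}^{a-1}$ denotes the upper $\alpha$-point of the $F$-distribution with $(a-1, n-a)$ degrees of freedom, i.e., the point whose upper-tail probability is $\alpha$. Solving this, we get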

\begin{align} (\eta^\alpha_{\omega})^2 = F_{n-a, \alpha}^{a-1} \, \frac{a-1}{n-a} \tag{7.28} \end{align}
Then, we get the $(\alpha)$-rejection region ${\widehat R}_{H_N}^{\alpha; \Theta}$ (or ${\widehat R}_{\widehat{x}}^{\alpha; X}$) of $H_N =\{(0,0, \ldots, 0)\} \ ( \subseteq \Theta= {\mathbb R}^a)$ as follows:

\begin{align} {\widehat R}_{H_N}^{\alpha; \Theta} & = \bigcap_{\omega =((\mu_i)_{i=1}^a, \sigma ) \in \Omega (={\mathbb R}^a \times {\mathbb R}_+ ) \mbox{ such that } \pi(\omega) \in H_N=\{(0,0,\ldots,0)\}} \{ E(x) (\in \Theta) : d_\Theta^{x} (E(x), \pi(\omega)) \ge \eta^\alpha_{\omega } \} \nonumber \\ & = \Big\{ E(x) (\in \Theta) : \frac{ (\sum_{i=1}^a n_i ( x_{i \bullet} - x_{\bullet \bullet} )^2) /(a-1)}{ (\sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet })^2)/(n-a) } \ge F_{n-a, \alpha}^{a-1} \Big\} \tag{7.29} \end{align}
Thus,
\begin{align} {\widehat R}_{\widehat{x}}^{\alpha; X} = E^{-1}({\widehat R}_{H_N}^{\alpha;\Theta}) = \Big\{ x \in X \;:\; \frac{ (\sum_{i=1}^a n_i ( x_{i \bullet} - x_{\bullet \bullet} )^2 )/(a-1)}{ (\sum_{i=1}^a \sum_{k=1}^{n_i} (x_{ik} - x_{i \bullet})^2)/(n-a) } \ge F_{n-a, \alpha}^{a-1} \Big\} \tag{7.30} \end{align}
That is, $H_N$ is rejected at significance level $\alpha$ whenever the measured value $x$ gives an $F$-ratio of at least $F_{n-a, \alpha}^{a-1}$.

| $\fbox{Note 7.2}$ | It should be noted that only (B$_2$) is the mathematical part of this argument. |
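The rejection region (7.30) translates directly into a computation. The following is a minimal, dependency-free Python sketch (not the author's code): it computes $x_{i\bullet}$ and $x_{\bullet\bullet}$ as in (7.18), the sums of squares (7.19) and (7.22), the $F$-ratio of (7.29), and the upper-tail probability (7.26). The $F$ tail is evaluated through the regularized incomplete beta function with the standard continued-fraction (modified Lentz) method, and the $\alpha$-point $F_{n-a,\alpha}^{a-1}$ is found by bisection; these numerical techniques are swapped in here because the text only refers to the $F$-distribution itself. All function names are hypothetical. If SciPy is available, `scipy.stats.f.sf` and `scipy.stats.f.isf` give the same tail probability and $\alpha$-point.

```python
import math
from typing import Sequence, Tuple


def _beta_cf(a: float, b: float, x: float,
             max_iter: int = 300, eps: float = 1e-14) -> float:
    """Continued fraction for the incomplete beta function (modified Lentz method)."""
    tiny = 1e-300  # guards against division by zero
    c = 1.0
    d = 1.0 - (a + b) * x / (a + 1.0)
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        # even and odd coefficients of the continued fraction
        for aa in (m * (b - m) * x / ((a + 2 * m - 1) * (a + 2 * m)),
                   -(a + m) * (a + b + m) * x / ((a + 2 * m) * (a + 2 * m + 1))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            h *= d * c
        if abs(d * c - 1.0) < eps:  # last factor is ~1: converged
            break
    return h


def reg_inc_beta(a: float, b: float, x: float) -> float:
    """Regularized incomplete beta function I_x(a, b) for a, b > 0."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _beta_cf(a, b, x) / a
    return 1.0 - front * _beta_cf(b, a, 1.0 - x) / b  # symmetry I_x(a,b) = 1 - I_{1-x}(b,a)


def f_upper_tail(f: float, d1: float, d2: float) -> float:
    """P(F > f) for F ~ F(d1, d2): the integral (7.26) with lower limit f."""
    if f <= 0.0:
        return 1.0
    return reg_inc_beta(d2 / 2.0, d1 / 2.0, d2 / (d2 + d1 * f))


def f_alpha_point(alpha: float, d1: float, d2: float, iters: int = 200) -> float:
    """Upper alpha-point F_{d2,alpha}^{d1}, i.e. the f with P(F > f) = alpha (bisection)."""
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie in (0, 1)")
    lo, hi = 0.0, 1.0
    while f_upper_tail(hi, d1, d2) > alpha:  # enlarge bracket until tail <= alpha
        hi *= 2.0
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if f_upper_tail(mid, d1, d2) > alpha:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


def one_way_anova(groups: Sequence[Sequence[float]]) -> Tuple[float, int, int, float]:
    """Return (F-ratio of (7.29), a - 1, n - a, upper-tail probability of (7.26))."""
    a = len(groups)
    n = sum(len(g) for g in groups)
    if a < 2 or n <= a or any(len(g) == 0 for g in groups):
        raise ValueError("need a >= 2 non-empty groups and n > a observations")
    means = [sum(g) / len(g) for g in groups]                  # x_{i.}  (7.18)
    grand = sum(sum(g) for g in groups) / n                     # x_{..}  (7.18)
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))  # (7.22)
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)      # (7.19)
    if ss_within == 0.0:
        raise ValueError("SS(x) = 0: the F-ratio is undefined")
    d1, d2 = a - 1, n - a
    f_ratio = (ss_between / d1) / (ss_within / d2)
    return f_ratio, d1, d2, f_upper_tail(f_ratio, d1, d2)


# Toy data (hypothetical): two groups of three observations each.
f_ratio, d1, d2, p = one_way_anova([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])
alpha = 0.05
reject = f_ratio >= f_alpha_point(alpha, d1, d2)  # is x in the rejection region (7.30)?
print(f_ratio, d1, d2, reject)  # 13.5 1 4 True
```

Because $\eta^\alpha_\omega$ in (7.28) does not depend on $\omega$, the test needs only $a$, $n$ and $\alpha$, never $\sigma$. Comparing `p` with `alpha` gives the same decision as comparing `f_ratio` with the $\alpha$-point, since the tail probability is decreasing in $f$.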